Generative Engine Optimization: Engineering Citation-Grade Infrastructure for AI Search

The translation-layer thesis — with empirical proof from the world's first 100% Relevance Ratio site.

The other half of Citation Authority and Agentic Reliability.

Authors: Robert Maynard, Jr., Cofounder and CEO · Mark Garland, Cofounder and CRO

GEOlocus.ai · Phoenix, Arizona · geolocus.ai

After GEOlocus.ai translation: fully grounded — ingested in full, with reasoning budget to spare.

Executive Summary

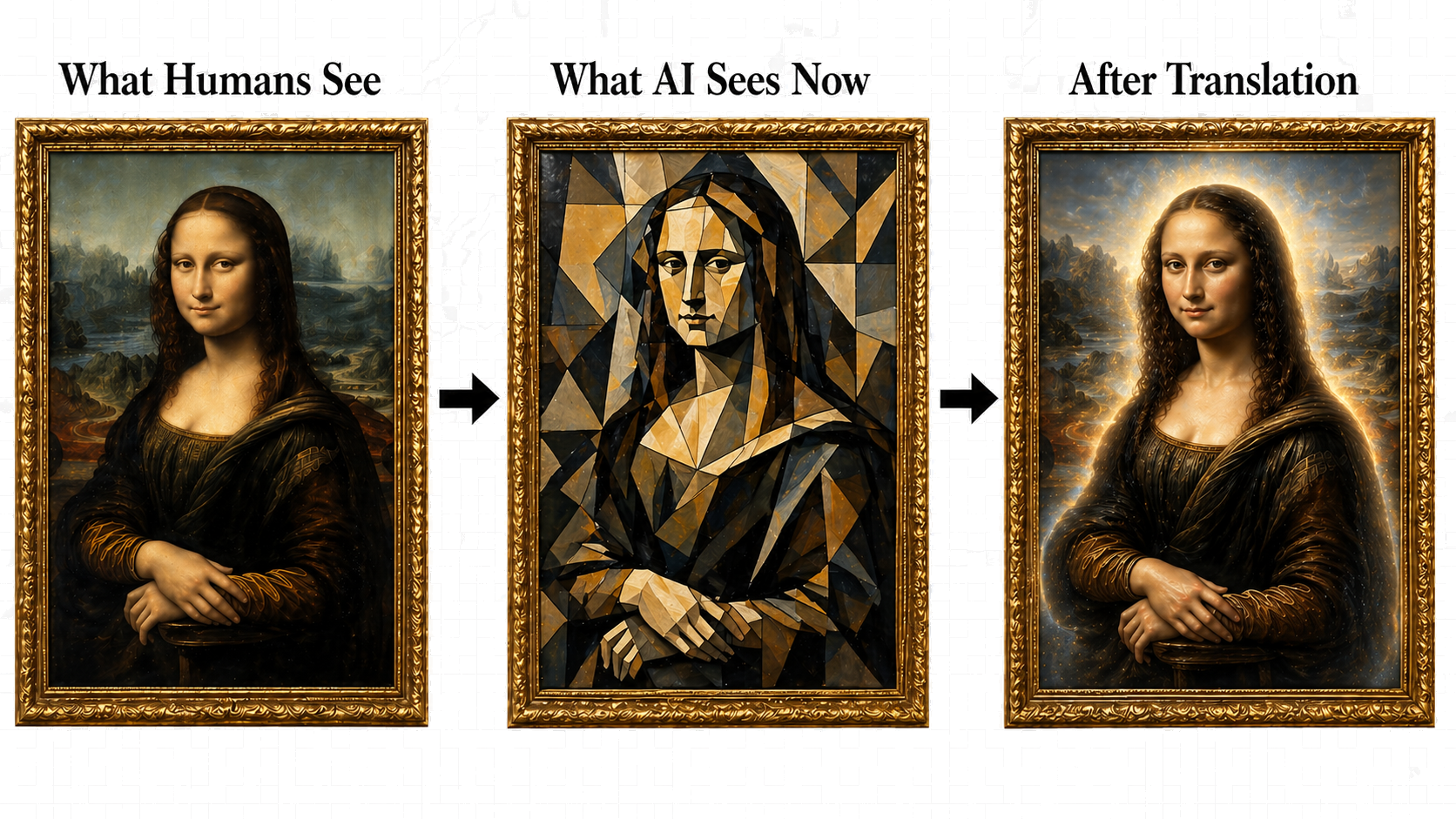

AI search systems do not rank publishers the way Google ranks pages. They select sources to cite. The selection criterion is fluency: can a given site be ingested, verified, and quoted by a language model under fixed time and token budgets, without the model having to fill in gaps? When the model fills in gaps, hallucination begins. Sites engineered for AI fluency are cited preferentially because they reduce hallucination risk to the platform serving them.

This paper argues that AI fluency is an engineering problem at the delivery layer, not a content or marketing problem. The paper grounds that argument in three converging bodies of evidence: (1) the formal protocols emerging in 2025–2026 to make sites machine-readable (llms.txt, RFC 9309, JSON-LD, sitemap delivery); (2) the bifurcation of crawler policy at major AI providers between training and live retrieval (GPTBot vs. OAI-SearchBot; ClaudeBot vs. Claude-Web); and (3) a publicly reproducible cohort study of one delivery-layer implementation — Top10Lists.us — measured against industry peers across four metrics: Signal-to-Noise Ratio (SNR), Source Grounding Ratio (SGR), Retrieval Token Cost (RTC), and Records-per-Second (RPS).

On the cohort benchmark, Top10Lists.us reports 100% mean SNR (vs. 73% cohort median), 0.94 SGR (vs. 0.26), 0.0493 RTC (more than 7× more efficient than the cohort median of 0.362), and 726,412 RPS measured at the datacenter layer on a 230,329-terminal-URL sitemap tree (vs. 372 cohort median, approximately 50× the next-fastest site and approximately 1,950× the cohort median). All four metric definitions, source code, and per-page receipts are published at dated, frozen methodology pages [18]. In a separate controlled multi-system evaluation, all four major grounded AI providers — OpenAI gpt-4o-search, Anthropic Claude Sonnet 4.5, Google Gemini 2.5 Pro, and Perplexity (web interface) — identified Top10Lists.us as the gold-standard exemplar for AI-citable real estate professional content when retrieval succeeded; Perplexity's Sonar Pro API path returned a negative finding empirically contradicted by direct file checks and treated as an API-vs-web-interface infrastructure inconsistency [16, 16a].

The implication for publishers is direct. Picture each AI system as a tourist on a short layover, and each publisher's site as a city whose language the tourist reads only partially. Most cities respond to the confused tourist by speaking louder in their own language. GEOlocus.ai rebuilt the city in the tourist's language. Publishers who do the same are quoted by AI. Publishers who do not are visited, partially understood, and approximated.

Key empirical anchors

1. The Translation Problem

The tourist's constraints are real infrastructure, not a figure of speech. Modern retrieval-augmented generation (RAG) systems operate within compute budgets and context windows that are bounded at the model layer and priced at the provider layer [6]. A model loading a site for live answer composition cannot read indefinitely; it ingests, extracts, decides, and cites within a fixed token allowance per query. Three properties of the modern AI search market sharpen the budget problem into a procurement decision for content publishers.

1.1 The dual budget — token AND reasoning.

Two budgets bound every live-retrieval AI query, and only one of them is visible in the bill. The first is the token budget: how many input tokens the model can consume from a single page before the provider's per-query economics break down. The second is the reasoning budget: how much of the model's context window remains for analysis, verification, and synthesis after the page is ingested. A page that consumes the entire token budget on boilerplate leaves nothing for reasoning. The model still returns an answer, but the answer is the model's prior, not the page's ground truth. The publishers AI cites are the publishers whose pages leave reasoning budget on the table.
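The two budgets can be made concrete with a few lines of arithmetic. The sketch below is illustrative only: the context-window size, the output reservation, and both page sizes are hypothetical numbers chosen for the example, not figures from any provider or from the cohort study, and reasoning_headroom is a hypothetical helper.

```python
def reasoning_headroom(context_window: int, page_tokens: int, reserved_output: int) -> int:
    """Tokens left for analysis, verification, and synthesis after one page is ingested.

    All inputs are illustrative assumptions, not provider-published limits.
    """
    remaining = context_window - page_tokens - reserved_output
    # Zero or less means the page exhausts the window: nothing is left for reasoning,
    # and any overflow must be truncated, discarding part of the page's ground truth.
    return max(remaining, 0)


WINDOW = 128_000  # hypothetical context window, in tokens
OUTPUT = 4_000    # hypothetical reservation for the answer itself

heavy = reasoning_headroom(WINDOW, page_tokens=120_000, reserved_output=OUTPUT)  # boilerplate-heavy page
clean = reasoning_headroom(WINDOW, page_tokens=3_000, reserved_output=OUTPUT)    # clean-room page, same facts
print(heavy, clean)  # 4000 121000
```

Both hypothetical pages carry the same facts; only the wrapper differs, and the wrapper decides whether the model has room left to verify them.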

1.2 Token economics are not theoretical.

At Google's 2026 cost basis for Gemini 3.1 Flash-Lite, the model marketed for high-volume retrieval, input tokens are billed at $0.25 per million [22b]. A query routed against a publisher whose page returns 1.06 MB of HTML to deliver 11 KB of substantive text (an empirically observed pattern in the SEO industry cohort, documented at [18a]) costs the AI provider roughly 100× more per fact retrieved than a query routed against a clean-room publisher. The difference, in the metaphor: the second publisher's city has signs the tourist can read; the first publisher's city forces the tourist to translate every block before he gets the address. AI providers are economically incentivized to prefer the second publisher. The cost differential compounds with query volume.
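The roughly 100× figure follows from payload size alone. A minimal sketch, assuming about 4 bytes per token (a common rule of thumb for English text; real tokenizers, and HTML markup in particular, will differ) and assuming the clean-room page delivers only the ~11 KB of substantive text:

```python
PRICE_PER_MILLION_INPUT_TOKENS = 0.25  # USD, the Flash-Lite input rate cited above [22b]
BYTES_PER_TOKEN = 4.0                  # assumption: rough average for English text


def input_cost_usd(payload_bytes: int) -> float:
    """Estimated input-token cost of ingesting one payload of the given size."""
    tokens = payload_bytes / BYTES_PER_TOKEN
    return tokens * PRICE_PER_MILLION_INPUT_TOKENS / 1_000_000


bloated = input_cost_usd(1_060_000)  # 1.06 MB of HTML around the page's facts
clean = input_cost_usd(11_000)       # ~11 KB of substantive text served on its own
print(f"${bloated:.5f} vs ${clean:.5f} per retrieval, ratio {bloated / clean:.0f}x")
# $0.06625 vs $0.00069 per retrieval, ratio 96x
```

The per-token assumptions cancel out of the ratio: it is simply 1,060,000 / 11,000 ≈ 96, which is where the roughly 100× comes from. The absolute amounts per retrieval are small; it is multiplication by query volume that makes the differential material.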

1.3 Hallucination risk is the platform's risk, not the publisher's.

Hallucination has become the single most-cited concern among AI model developers and enterprise adopters; Arthur J. Gallagher's 2026 AI Adoption and Risk Benchmarking identified it as the primary risk concern across the deployed AI stack [21]. When an AI cannot verify a fact in a source it has just retrieved, it has two options: skip the source and cite a verified one, or interpolate and risk reputational damage. RAG research has formalized this risk into metrics implemented in Microsoft Azure AI Foundry's evaluators and the Ragas library, and applied in the Stanford HAI legal-RAG study [19a, 19b, 14e]: groundedness, faithfulness, and citation accuracy are first-order properties of the retrieved source, not of the model. Galileo AI frames a related family of metrics (BLEU, ROUGE, perplexity, faithfulness) as industry table stakes for generation-side fluency [22a]. A model is only as good as the source it is given. Publishers who reduce ambiguity at the source reduce hallucination risk for the model, and earn citation as a result.

1.4 The retrieval pivot is structural, not cyclical.

Through 2025, AI systems answered most queries from parametric memory: the model's training-time knowledge, frozen at cutoff. The tourist consulted a guidebook printed two editions ago. By 2026, that pattern had broken. Google launched Search Live globally [17a, 17b]. OpenAI split its crawler fleet into a training agent (GPTBot) and a real-time search agent (OAI-SearchBot) [20a]. Anthropic published Claude-Web alongside ClaudeBot. Live retrieval is now the default execution path for queries that demand current data, such as local information, pricing, inventory, news, and professional verification. The tourist now asks a local instead of consulting the book. The publishers AI cites are the publishers AI can read at retrieval time, under budget, with verifiable claims.

2. The Translation Layer: Industry-Coordinated, Not Vendor-Invented

The infrastructure that makes a site fluent to AI is not GEOlocus.ai's invention. It is a stack of formally published protocols, RFCs, and industry-coordinated conventions that collectively codify the translation layer between human-shaped HTML and machine-shaped ingestion.

2.1 robots.txt — the phrasebook at the airport (RFC 9309).

RFC 9309, published September 2022, formalized the Robots Exclusion Protocol after twenty-eight years of de-facto adoption. It is the universal first-fetch contract for every well-behaved crawler, AI or otherwise. Google documents a 24-hour cache TTL on robots.txt as the operational default [23b]. Bots that ignore RFC 9309 are excluded from polite-bot allowlists used by upstream infrastructure (Cloudflare, Fastly, AWS WAF). For a publisher, robots.txt is not a feature — it is the entry contract. Every visitor reads it before doing anything else.

2.2 llms.txt — the walking-tour pamphlet at the hotel desk.

In 2024, Jeremy Howard (Answer.AI) proposed llms.txt: a Markdown summary of a site, designed specifically to give language models an outline of a domain's ground truth without forcing the model to parse boilerplate-heavy HTML. The proposal explicitly cites the small-context-window problem as its motivation — the same problem this paper opens with [24]. Mintlify, Cursor, Anthropic, and a growing share of documentation platforms adopted llms.txt during 2025–2026 as their canonical AI-facing surface [24, 25]. The convention is informal (no RFC), but its commercial adoption tracks the same arc as schema.org structured data circa 2014: vendor-coordinated, then de-facto standard, then expected. GEOlocus.ai's methodology treats llms.txt as a measurable GEO baseline criterion [16].
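The layout the llms.txt proposal describes is simple enough to generate from a page inventory: an H1 with the site name, a blockquote summary, then H2 sections listing Markdown links with optional notes [24]. The generator below is a minimal sketch of that layout; the class names and the example site are hypothetical, and it omits the proposal's optional free-text details section.

```python
from dataclasses import dataclass, field


@dataclass
class LlmsTxtLink:
    title: str
    url: str
    note: str = ""


@dataclass
class LlmsTxt:
    """Minimal model of the llms.txt layout: H1 name, blockquote summary, H2 link sections."""
    name: str
    summary: str
    sections: dict[str, list[LlmsTxtLink]] = field(default_factory=dict)

    def render(self) -> str:
        lines = [f"# {self.name}", "", f"> {self.summary}", ""]
        for heading, links in self.sections.items():
            lines += [f"## {heading}", ""]
            for link in links:
                note = f": {link.note}" if link.note else ""
                lines.append(f"- [{link.title}]({link.url}){note}")
            lines.append("")
        return "\n".join(lines)


# Hypothetical site and URLs, for illustration only.
doc = LlmsTxt(
    name="Example Realty Directory",
    summary="Verified listings of licensed real estate agents, organized by city.",
    sections={
        "Methodology": [
            LlmsTxtLink("Ranking method", "https://example.com/method.md", "how agents are scored"),
        ],
        "Directories": [
            LlmsTxtLink("Phoenix, AZ", "https://example.com/az/phoenix.md"),
        ],
    },
)
print(doc.render())
```

The proposal also suggests publishing clean Markdown versions of pages at the original URL with .md appended, which is why the example links point at .md files.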

2.3 schema.org / JSON-LD — the bilingual street signs.

schema.org is a shared vocabulary coordinated by Google, Microsoft, Yahoo, and Yandex, most commonly embedded in pages as JSON-LD, a W3C Recommendation. AI systems use schema.org parsers to extract entities (Person, Organization, Article, Place, LocalBusiness, BreadcrumbList) without parsing the surrounding HTML. A publisher who emits JSON-LD on every page hands the AI a pre-structured entity graph; a publisher who does not forces the AI to infer entities from prose, with measurable factuality cost [19a, 19b]. The tourist who can read every street sign in his own script navigates faster, makes fewer wrong turns, and trusts the city more.
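Emitting JSON-LD is mostly serialization. The sketch below renders a schema.org BreadcrumbList, one of the entity types named above, as the script element of type application/ld+json that goes in a page's HTML; the helper name and the example URLs are hypothetical.

```python
import json


def jsonld_script(entity: dict) -> str:
    """Serialize a schema.org entity into a JSON-LD <script> element."""
    payload = json.dumps(entity, indent=2, ensure_ascii=False)
    # "<\/" is a valid JSON escape for "</"; it stops a string value from
    # closing the surrounding <script> element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical breadcrumb trail for a city directory page.
breadcrumbs = {
    "@context": "https://schema.org",
    "@type": "BreadcrumbList",
    "itemListElement": [
        {"@type": "ListItem", "position": 1, "name": "Arizona", "item": "https://example.com/az"},
        {"@type": "ListItem", "position": 2, "name": "Phoenix", "item": "https://example.com/az/phoenix"},
    ],
}
print(jsonld_script(breadcrumbs))
```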

2.4 Sitemap.xml — the transit map.

Google's crawl-budget documentation [19d] formalizes sitemap.xml as the publisher-controlled hint for which URLs the crawler should prioritize. For training crawlers (GPTBot, ClaudeBot), the sitemap is the surface-area declaration: every station the cataloguing librarian will photograph. For live-retrieval crawlers (OAI-SearchBot, Claude-Web, Gemini-User), sitemap freshness (lastmod) signals which pages were updated since the model's training cutoff. That gap is the Recognition Lag described in Section 7, and the freshness signal is how live retrieval closes it.
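A consumer of that freshness signal only needs the loc and lastmod pairs. The sketch below, using only Python's standard library, lists the URLs modified after a given cutoff. The cutoff date and the sample URLs are hypothetical, and it assumes lastmod values carry at least a full YYYY-MM-DD date, the form the sitemaps.org protocol describes.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def updated_since(sitemap_xml: str, cutoff: date) -> list[str]:
    """Return the <loc> URLs whose <lastmod> date falls after `cutoff`.

    URLs without <lastmod> are skipped because they carry no freshness signal.
    Assumes each lastmod starts with a full YYYY-MM-DD date.
    """
    root = ET.fromstring(sitemap_xml)
    fresh = []
    for url in root.findall("sm:url", SITEMAP_NS):
        loc = url.findtext("sm:loc", namespaces=SITEMAP_NS)
        lastmod = url.findtext("sm:lastmod", namespaces=SITEMAP_NS)
        if loc and lastmod and date.fromisoformat(lastmod.strip()[:10]) > cutoff:
            fresh.append(loc.strip())
    return fresh


SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/az/phoenix</loc><lastmod>2026-04-20</lastmod></url>
  <url><loc>https://example.com/az/tucson</loc><lastmod>2025-06-01T12:00:00+00:00</lastmod></url>
  <url><loc>https://example.com/about</loc></url>
</urlset>"""

# Hypothetical training cutoff, for illustration only.
print(updated_since(SAMPLE, cutoff=date(2025, 12, 31)))  # ['https://example.com/az/phoenix']
```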

2.5 The integrated translation layer.

Each of these surfaces is publicly specified, vendor-coordinated, and read by AI systems on a documented schedule. None is speculative. None is GEOlocus.ai-specific. What is novel — and what GEOlocus.ai engineers as a service — is the integration: ensuring that a publisher's robots.txt, llms.txt, JSON-LD, sitemap.xml, and HTML delivery are all aligned, current, and measurable at the same moment of crawl. The cost of misalignment is invisibility at the AI layer. A city can have a phrasebook, a transit map, and bilingual street signs and still lose the tourist if any one of them is out of date or contradicts the others.
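Part of that alignment can be checked mechanically. The sketch below uses Python's standard urllib.robotparser to flag two contradictions for a single crawler: URLs the sitemap declares but robots.txt disallows, and URLs llms.txt promotes that the sitemap omits. The function, the sample files, and the URLs are hypothetical; it is an illustration, not GEOlocus.ai's tooling.

```python
from urllib.robotparser import RobotFileParser


def alignment_report(robots_txt: str, sitemap_urls: set[str],
                     llms_links: set[str], user_agent: str) -> dict[str, set[str]]:
    """Flag contradictions between robots.txt, sitemap.xml, and llms.txt for one crawler."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        # Declared in the sitemap, yet disallowed for this crawler.
        "sitemap_but_blocked": {u for u in sitemap_urls if not parser.can_fetch(user_agent, u)},
        # Promoted in llms.txt, yet absent from the sitemap.
        "llms_not_in_sitemap": llms_links - sitemap_urls,
    }


# Hypothetical site files.
ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /drafts/
"""
SITEMAP = {"https://example.com/", "https://example.com/drafts/new-listing"}
LLMS = {"https://example.com/", "https://example.com/method.md"}

print(alignment_report(ROBOTS, SITEMAP, LLMS, "OAI-SearchBot"))
```

Here the sitemap advertises a drafts page that every crawler is told not to fetch, and llms.txt points at a methodology page the sitemap never declares: small contradictions of exactly the kind the paragraph above describes.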

2.6 A note on traffic vs. impact.

A common misreading among publishers is that low crawl counts on llms.txt and robots.txt mean those files are not worth maintaining. The reasoning seems intuitive: if AI bots only fetch the file a handful of times per month, the file must not be load-bearing.

The reasoning is wrong. Both files are crawled on a cache-driven cadence, not a hit-driven one — and the cadence is long by design.

robots.txt caching is the documented case. RFC 9309, the formal protocol specification, states that “Crawlers SHOULD NOT use the cached version for more than 24 hours, unless the robots.txt file is unreachable” [23a]. Google's developer documentation confirms its operational implementation: “Google generally caches the contents of robots.txt file for up to 24 hours” [23b]. A 24-hour horizon sounds frequent, but it keeps fetch counts tiny relative to page crawls: a crawler fleet spread across many IPs fetches robots.txt about once per IP (or per cache) per cycle, not once per page crawled. Empirically on Top10Lists.us, robots.txt logs roughly 400 hits per 24 hours from 16 distinct bot fleets: real volume, but trivial against the 1.4 million page crawls in the same window.
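The arithmetic behind that asymmetry is easiest to see in a cache. The sketch below is a minimal per-host robots.txt cache that honors the 24-hour ceiling quoted above and falls back to a stale copy when the file is unreachable. RobotsCache, its fetch callable, and the simulated crawl schedule are hypothetical. Run for one crawler instance making 10,000 page crawls over 72 hours, it reaches the network for robots.txt three times.

```python
import time
from typing import Callable, Optional

MAX_CACHE_AGE_S = 24 * 60 * 60  # RFC 9309 §2.4: don't reuse a cached copy beyond 24 hours


class RobotsCache:
    """Per-host robots.txt cache for a single crawler instance."""

    def __init__(self, fetch: Callable[[str], Optional[str]],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch   # returns the file body, or None if the file is unreachable
        self._clock = clock
        self._entries: dict[str, tuple[float, str]] = {}
        self.network_fetches = 0

    def get(self, host: str) -> Optional[str]:
        now = self._clock()
        cached = self._entries.get(host)
        if cached is not None and now - cached[0] < MAX_CACHE_AGE_S:
            return cached[1]  # cache hit: no request reaches the publisher
        self.network_fetches += 1
        body = self._fetch(host)
        if body is None:
            # Unreachable: keep using the stale copy if there is one. With no copy at
            # all, a caller should treat None as "assume complete disallow".
            return cached[1] if cached is not None else None
        self._entries[host] = (now, body)
        return body


# Simulate 10,000 page crawls spread evenly over 72 hours, each consulting robots.txt first.
fake_now = [0.0]
cache = RobotsCache(fetch=lambda host: "User-agent: *\nAllow: /\n", clock=lambda: fake_now[0])
for i in range(10_000):
    fake_now[0] = i * (72 * 3600 / 10_000)
    cache.get("example.com")
print(cache.network_fetches)  # 3
```

A fleet behaves like many such instances, so fleet-wide fetches scale with the number of IPs or caches times the number of 24-hour cycles, never with the number of pages crawled.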

llms.txt is the more striking case. There is no RFC governing its cache TTL, but the empirical pattern across major AI providers is dramatically longer than robots.txt — observed cycles in the 30-to-180-day range per provider per domain. Independent audits corroborate: OtterlyAI's 90-day GEO study found just 84 requests to /llms.txt out of more than 62,100 total AI bot visits — roughly 0.14% of bot traffic against a file every major LLM provider claims to consume [26a]. Wix Studio's separate audit logged zero GPTBot, ClaudeBot, or PerplexityBot fetches against llms.txt in its observation window [26b].

The mechanism is the cache layer. Cloudflare's analysis with ETH Zurich frames it directly: “AI bots are breaking the web's cache layer” [26e]. The crawl-to-referral ratios make the asymmetry concrete: ClaudeBot crawls roughly 24,000 pages per referral it sends back; GPTBot crawls roughly 1,276 pages per referral [26d]. Citations are served from cache; they do not generate visits. “Both ChatGPT and Perplexity frequently cite websites without actually visiting them, relying instead on cached content” [26c].

The result is a measurement trap. A publisher who measures llms.txt value by daily hit count sees five or six requests per 24 hours from one or two bots and concludes the file is irrelevant. The conclusion misreads the mechanism entirely: every major AI provider has the file in cache, refreshing on a multi-week-to-multi-month cycle, and citing from it during live retrieval against the cached copy. The file is being read constantly — just not re-fetched.

Presence and freshness, not fetch volume, are the signals that matter. Skip llms.txt because it generates no traffic and you have skipped the file every major AI provider quietly carries in cache against your domain.

3. Two Crawler Worlds: Training vs. Live Retrieval

Through 2024, AI providers ran a single bot fleet per provider. OpenAI had GPTBot. Anthropic had ClaudeBot. Both performed dual duty: they ingested pages for training and they retrieved pages to answer live queries. In 2025–2026, those two duties split across separate crawlers.

| Provider | Training crawler | Live-retrieval crawler | Strategic asymmetry |
|---|---|---|---|
| OpenAI | GPTBot | OAI-SearchBot, ChatGPT-User | Block training; allow search |
| Anthropic | ClaudeBot | Claude-Web, Claude-User | Block training; allow search |
| Google | Google-Extended | Gemini-User | Same fleet for both classes |
| Perplexity | PerplexityBot | PerplexityBot | Single fleet |

The strategic asymmetry on the OpenAI and Anthropic rows is the pivot point of the 2026 publisher decision. A publisher can now block GPTBot in robots.txt (preventing training-time ingestion) while allowing OAI-SearchBot (preserving citation at query time). This stance has been argued in industry coverage [20b] as advantageous for publishers who want to be cited but not absorbed. It is also strictly publisher-side: AI providers do not punish sites that block training but allow search.
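The block-training, allow-search stance takes only a few lines of robots.txt. The sketch below writes one using the crawler tokens from the table above and checks it with Python's standard urllib.robotparser. The example URL is hypothetical, and token lists change, so each provider's current documentation is the authority before deploying. Note that urllib.robotparser matches user agents by substring and applies rules in file order rather than RFC 9309's longest-match rule, which is adequate for a file this simple.

```python
from urllib.robotparser import RobotFileParser

# Block the training crawlers, allow the live-retrieval crawlers (tokens from the table above).
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: Claude-Web
Allow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

PAGE = "https://example.com/az/phoenix"  # hypothetical URL
for agent in ("GPTBot", "ClaudeBot", "OAI-SearchBot", "Claude-Web"):
    verdict = "allowed" if parser.can_fetch(agent, PAGE) else "blocked"
    print(f"{agent}: {verdict}")
# GPTBot: blocked
# ClaudeBot: blocked
# OAI-SearchBot: allowed
# Claude-Web: allowed
```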

The empirical question for any publisher is no longer “do AI bots crawl my site” — they all do. The question is whether the site is fetched by the cataloguing librarian (heavy crawl, low stakes per fetch, citation deferred to next training run), by the visiting executive (light crawl, high stakes per fetch, citation immediate), or both. GEOlocus.ai instruments both.

On Top10Lists.us, the 7-day window ending April 27, 2026 logged 1,459,222 AI-bot crawl events: GPTBot 457,612 (training); ChatGPT Search 48,290 (live retrieval); Meta AI/Llama 235,350 (training); ClaudeBot 42,127 (training); PerplexityBot 32,321 (live retrieval). The training-to-retrieval ratio of approximately 9.5:1 reflects platform-scale demand for the site's content at both layers, recorded with full bot-class resolution at the delivery layer [13].

4. GEOlocus.ai's Approach: GEO as a Service

GEOlocus.ai introduces the term GEO as a Service (GaaS) to describe the operating model. The model is structured to remove implementation friction from the publisher side. The publisher's existing site, CMS, content workflow, compliance posture, and human-facing user experience remain unchanged. GEOlocus.ai operates the AI-facing delivery layer behind the publisher's domain via a thin edge-routing contract.

4.1 What changes for the publisher.

Nothing visible to human users. The browser path of every URL renders the same React, Webflow, WordPress, or static HTML the publisher already serves. No CMS migration. No template rewrite. No compliance review. No security review. The publisher's revenue paths (advertising, lead capture, e-commerce, subscription) are untouched. The residents of the city see the city they have always lived in.

4.2 What changes for AI bots.

Every fetch from a bot user-agent is intercepted at the edge and served clean-room HTML: structured, schema-rich, low-boilerplate, JSON-LD–first. Page-level data is exposed with full attribution metadata so any extracted fact carries its source forward. Bot crawls are logged with full resolution (bot class, fetch time, page path, IP origin, cache status) and separated by training-vs-retrieval class for downstream analytics [13]. The tourist sees a city built in his language, where every fact arrives with a citation already attached.
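The routing decision described above reduces to classifying the requesting user agent and logging what was served. The sketch below is a hypothetical illustration of that step, not GEOlocus.ai's edge code. The token-to-class mapping follows the crawler table in Section 3, the log fields mirror the list above, and matching on the User-Agent header alone is a simplifying assumption: that header can be spoofed, so a production deployment would also need to verify a bot's identity (for example, against provider-published IP ranges).

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Crawler tokens and classes as listed in the Section 3 table (illustrative).
BOT_CLASSES = {
    "GPTBot": "training",
    "ClaudeBot": "training",
    "OAI-SearchBot": "retrieval",
    "ChatGPT-User": "retrieval",
    "Claude-Web": "retrieval",
    "Claude-User": "retrieval",
    "Gemini-User": "retrieval",
    "PerplexityBot": "retrieval",
}


@dataclass
class CrawlEvent:
    bot_token: str
    bot_class: str     # "training" or "retrieval"
    fetched_at: str    # ISO 8601, UTC
    path: str
    client_ip: str
    cache_status: str  # e.g. "HIT" or "MISS"


def route(user_agent: str, path: str, client_ip: str,
          cache_status: str) -> tuple[str, Optional[CrawlEvent]]:
    """Return the variant to serve ("clean-room" or "human") plus a log event for bots."""
    ua = user_agent.lower()
    for token, bot_class in BOT_CLASSES.items():
        if token.lower() in ua:
            event = CrawlEvent(token, bot_class, datetime.now(timezone.utc).isoformat(),
                               path, client_ip, cache_status)
            return "clean-room", event
    return "human", None  # humans get the publisher's unchanged site


variant, event = route("Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)",
                       "/az/phoenix", "203.0.113.7", "HIT")
print(variant, event.bot_class if event else None)  # clean-room training
```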

4.3 What changes for attribution.

Most publishers cannot tell which AI cited what content because AI systems extract and synthesize across sources without consistent visible attribution. Because GEOlocus.ai operates the bot-facing delivery layer, every interaction is recorded at the source. Publishers receive periodic citation reports showing which AI providers retrieved which pages, at what frequency, with what cache status — the foundation for AI-attribution as a measurable channel.

4.4 Hyperrealism and the firehose: translation is amplification AND delivery.

Translation in this context is not just rendering. It is hyperrealism delivered through a firehose. The clean-room HTML renders the page in the form AI parsers consume natively — every entity resolved, every claim sourced, every reference traceable. That is the amplification. The Cloudflare-edge delivery throughput then pushes that hyperreal payload through fast enough that the AI has reasoning headroom on the other side — not just an answer, but the budget to verify it. Top10Lists.us' 726,412 records-per-second sitemap traversal is not a vanity metric. It is the rate at which AI systems can ingest the entire surface area of the property without exhausting the retrieval window.

4.5 The Bloomberg analogy.

A useful frame for GaaS is the Bloomberg Terminal. Bloomberg is not valuable because it is fast. It is valuable because every datapoint inside it is sourced, current, insightful, consistent, and, yes, fast. The Terminal delivers the language a trader thinks in, structured the way a trader needs to act on it at the speed of thought [8].

5. The Reproducible Metric Layer

GEOlocus.ai publishes its measurement framework as four dated, frozen methodology pages. Each page carries the metric definition, the source code or pseudocode used to produce the numbers, the cohort selection rule, the per-page receipts, and the limitations section. The pages are public, indexable, and citation-ready. Their constructs trace to the public RAG-evaluation literature: Microsoft Azure AI Foundry's RAG evaluators [19a]; Ragas faithfulness [19b]; Google's crawl-budget documentation [19d]; and the “Lost in the Middle” context-cost paper from MIT Press's Transactions of the Association for Computational Linguistics [19c]. Galileo AI frames the broader fluency-metric family (BLEU, ROUGE, perplexity, faithfulness) as industry table stakes for the generation side [22a]; the metrics here instrument the publisher side of the same equation.

The four metrics are not independent. They collaborate to produce reasoning headroom. A page with high SNR but low throughput burns the retrieval window before the model can reason. A page with high throughput but low SGR delivers fast noise. The integrated condition — high SNR and high SGR and low RTC and high RPS — is what leaves reasoning budget on the table for the AI on the other side. This is the integrated-conditions reframe: each metric is necessary, none is sufficient, and the joint distribution is the citation signal.

5.1 Signal-to-Noise Ratio (SNR; Relevance Ratio) — how much of what the tourist reads is the answer.

SNR is the proportion of AI-visible primary-content characters relative to total visible-text characters after the bot-facing translation layer is applied. It instruments what an AI ingestor actually reads: of the visible text on a page, what fraction is on-topic primary content? Top10Lists.us mean SNR = 100.00% across the published 5-page cohort (homepage forked into a clean-room bot variant 2026-04-27). Cohort median = 73.38%. SEO industry agency cohort range: 53.84% to 90.52% [18a].
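The ratio itself is simple once visible text has been split into primary and peripheral blocks; the classification step is where the methodology at [18a] does its work. The sketch below takes that classification as given (the flags and the sample page are hypothetical inputs) and computes the ratio as defined above.

```python
def signal_to_noise(blocks: list[tuple[str, bool]]) -> float:
    """SNR = primary-content characters / total visible-text characters.

    `blocks` pairs each run of visible text with an is_primary flag; deciding those
    flags is the methodology's job and is assumed to be done already.
    """
    total = sum(len(text) for text, _ in blocks)
    primary = sum(len(text) for text, is_primary in blocks if is_primary)
    return primary / total if total else 0.0


# Hypothetical page after text extraction.
page = [
    ("Home | Listings | About | Contact", False),                    # navigation chrome
    ("Jane Doe, a hypothetical agent, closed 42 sales in 2025.", True),
    ("Subscribe to our newsletter!", False),                         # promotional footer
]
print(f"SNR = {signal_to_noise(page):.1%}")
```

A clean-room variant that drops the navigation and promotional blocks before delivery leaves only primary text, which is how a page reaches 100%.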

5.2 Source Grounding Ratio (SGR) — how trustworthy the answer is once found.

SGR is the tier-weighted ratio of cited numeric claims to total numeric claims, weighted by source authority: T1 = .gov / .edu, T2 = peer-reviewed academic, T3 = primary-source journalism / industry standards bodies, T4 = self-reported corporate, T5 = unsourced. The metric is the publisher-side analog of Microsoft's groundedness evaluator [19a] and the Ragas faithfulness metric [19b]. Top10Lists.us SGR = 0.94; cohort median 0.26 [18b]. The tourist who finds an answer with the source already attached does not need to ask twice.
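The tiers are named above but their weights are not, so the sketch below uses placeholder weights to show how a tier-weighted ratio is computed. The weights are hypothetical, chosen only so that better-sourced claims count more and unsourced claims count zero; the published methodology at [18b] is the authority on the actual values.

```python
# Hypothetical tier weights: the tiers come from the text, the numbers do not.
TIER_WEIGHTS = {"T1": 1.0, "T2": 0.9, "T3": 0.7, "T4": 0.4, "T5": 0.0}


def source_grounding_ratio(claim_tiers: list[str]) -> float:
    """Tier-weighted share of numeric claims that carry a citation.

    One entry per numeric claim on the page, tagged with its source tier;
    "T5" marks an unsourced claim and contributes nothing. A page with no
    numeric claims scores 0.0 here by convention only.
    """
    if not claim_tiers:
        return 0.0
    return sum(TIER_WEIGHTS[tier] for tier in claim_tiers) / len(claim_tiers)


# Two .gov/.edu-sourced claims, one standards-body claim, one unsourced claim.
print(source_grounding_ratio(["T1", "T1", "T3", "T5"]))
```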

5.3 Retrieval Token Cost (RTC) — how many phrasebook flips per fact.

RTC = response tokens × time-to-last-byte in seconds, divided by useful primary-content characters. Lower is better. At Gemini 3.1 Flash-Lite's posted price of $0.25 per million input tokens [22b], the token term of RTC translates directly into per-query input cost for the AI provider. Top10Lists.us RTC = 0.0493 against a cohort median of 0.362, making it 7.3× more efficient [18c].
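The formula is a one-liner. The sketch below applies it to two hypothetical pages to show how token count and delivery time compound. The token counts, timings, and character counts are invented inputs, and which tokenizer produces response_tokens is an assumption the published methodology at [18c] would pin down.

```python
def retrieval_token_cost(response_tokens: int, ttlb_seconds: float, useful_chars: int) -> float:
    """RTC = response tokens x time-to-last-byte (s) / useful primary-content characters."""
    return response_tokens * ttlb_seconds / useful_chars


# Hypothetical measurements for two pages carrying similar substance.
lean = retrieval_token_cost(response_tokens=2_000, ttlb_seconds=0.25, useful_chars=10_000)
bloated = retrieval_token_cost(response_tokens=60_000, ttlb_seconds=0.90, useful_chars=11_000)
print(f"lean {lean:.3f}, bloated {bloated:.3f}")  # lean 0.050, bloated 4.909

# The cohort comparison above: median RTC divided by Top10Lists.us RTC.
print(round(0.362 / 0.0493, 1))  # 7.3
```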

5.4 Records-per-Second (RPS) — how fast the transit runs.

RPS is total terminal-URL sitemap-tree discovery throughput at the Cloudflare datacenter layer (the speed AI crawlers actually experience), not human page-render speed. RPS is the throughput complement of TTLB on the sitemap-benchmark page [9]. Top10Lists.us RPS = 726,412, measured on a 230,329-terminal-URL sitemap tree. Cohort median = 372 records/sec; Top10Lists.us delivers approximately 50× the next-fastest site and approximately 1,950× the cohort median [18d].
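The sketch below shows what the metric counts: it walks a sitemap-index tree down to its terminal url entries and divides by elapsed time. It reads from an in-memory dictionary standing in for HTTP fetches, so it measures local parsing speed rather than the datacenter-layer delivery the published figure reflects [18d]. The function names and the sample tree are hypothetical; the last lines do the arithmetic on the reported figures.

```python
import time
import xml.etree.ElementTree as ET
from typing import Callable

NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def count_terminal_urls(root_url: str, fetch: Callable[[str], str]) -> int:
    """Walk a sitemap-index tree and count the terminal <url> entries it declares."""
    pending, total = [root_url], 0
    while pending:
        root = ET.fromstring(fetch(pending.pop()))
        if root.tag == NS + "sitemapindex":
            # Index file: queue every child sitemap for traversal.
            pending.extend(loc.text.strip() for loc in root.iter(NS + "loc"))
        else:
            total += sum(1 for _ in root.iter(NS + "url"))
    return total


def records_per_second(root_url: str, fetch: Callable[[str], str]) -> float:
    start = time.perf_counter()
    n = count_terminal_urls(root_url, fetch)
    return n / max(time.perf_counter() - start, 1e-9)


def _urlset(*paths: str) -> str:
    items = "".join(f"<url><loc>https://example.com{p}</loc></url>" for p in paths)
    return f'<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">{items}</urlset>'


# Hypothetical two-level tree held in memory instead of fetched over HTTP.
TREE = {
    "https://example.com/sitemap.xml": (
        '<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
        "<sitemap><loc>https://example.com/az.xml</loc></sitemap>"
        "<sitemap><loc>https://example.com/tx.xml</loc></sitemap>"
        "</sitemapindex>"
    ),
    "https://example.com/az.xml": _urlset("/az/phoenix", "/az/tucson"),
    "https://example.com/tx.xml": _urlset("/tx/austin"),
}
print(count_terminal_urls("https://example.com/sitemap.xml", TREE.__getitem__))  # 3

# Reported figures: 230,329 terminal URLs at 726,412 records/sec vs. the 372 cohort median.
print(f"{230_329 / 726_412:.3f} s vs {230_329 / 372 / 60:.1f} min")  # 0.317 s vs 10.3 min
```

At the reported rate the full 230,329-URL tree is traversed in about a third of a second; at the cohort-median rate the same tree would take roughly ten minutes.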

6. Empirical Validation

6.1 Cold-start to gold-standard, December 2025 to March 2026.

Top10Lists.us was registered in late 2025 [3]. The brand matched patterns AI systems associated with low-authority “top 10” listicle content, a category Google publicly stated it “works to combat” in Search [11]. Industry data from Seer Interactive showed listicle citations in Gemini falling 23 percentage points by April 2026, with a 30% month-over-month decline in the AI-search listicle window [12]. By March 2026, five months after deployment, Gemini 3 Flash, asked to evaluate Top10Lists.us against gold-standard criteria, returned the response documented at [10].

On April 26, 2026, the same prompt was sent through four grounded AI systems via API: OpenAI gpt-4o-search, Anthropic Claude Sonnet 4.5, Google Gemini 2.5 Pro, and Perplexity sonar-pro. The first three independently identified Top10Lists.us as the gold-standard exemplar. The Sonar Pro API path returned a negative finding contradicted by the other three's live-evidence observations and by direct file checks (the live page emits three JSON-LD blocks the API claimed were missing). On April 28, 2026, the same prompt was re-run against Perplexity's consumer-facing web interface and returned a positive finding consistent with the other three systems — confirming Top10Lists.us as a “credible Gold Standard exemplar for GEO engineering in the real estate vertical, especially on bot accessibility, machine-readable AI guidance, public methodology, freshness/verification claims, structured-data posture, and citation-preserving delivery” [16a]. The two-day variance between the API and web-interface retrieval paths is treated as a Perplexity infrastructure inconsistency, not a publisher-side grounding failure; the API path's negative finding remains documented unedited in the methodology archive for transparency. All four major AI providers, when retrieval succeeds, identify Top10Lists.us as the gold-standard exemplar [16].

6.2 Cohort comparison.

Four established SEO content agencies (Domain Authority 70+, decade-plus tenure) were tested against Top10Lists.us as a delivery-layer benchmark. The benchmark is not a content comparison; the agencies' content is high-quality. It measures whether AI bots can read the content under the time and token constraints they operate within, that is, whether each city is legible to the tourist who arrives at its gate. Surprisingly, one of the four cohort agencies blocks all bots, including Googlebot; the block is attributable to a misconfiguration of its edge workers.

6.3 The 100-site, 12-industry audit.

GEOlocus.ai conducted a controlled audit of 100 sites across 12 industries on 13 technical signals required for AI crawling, ingestion, and citation [7]. Top10Lists.us scored 13/13 — the only perfect score in the cohort. The cohort median was 3/13. The audit raw data, scoring rubric, and per-site receipts are published at [7]. The conclusion is consistent across the cohort: content strategy alone does not produce AI visibility. The delivery-layer infrastructure determines whether the content is read, verified, and cited.

7. The Recognition Lag

When the same prompt was sent to the four grounded AI systems in April 2026 without naming the site (“which U.S. real estate directories meet the GEO baseline criteria?”), none of the four surfaced Top10Lists.us. Each named legacy authorities (Zillow, Realtor.com, Redfin, Homes.com), every one of which fails the four-criteria GEO baseline matrix the same AI systems were asked to evaluate against. The contradiction is documented at [16].

This is the Recognition Lag. AI systems' parametric memory — the patterns frozen at training cutoff — has not yet caught up to live ground truth on which sites are actually citable. The Recognition Lag is not a flaw to be argued away. It is structural: training cycles run quarters, not weeks, and until the next cycle ingests fresher data, the model defaults to its prior. The guidebook is two editions old.

The Recognition Lag is also self-correcting. Live-retrieval search (Google Search Live, OAI-SearchBot, Claude-Web, Gemini-User, PerplexityBot) bypasses parametric memory and fetches the source directly at query time [17a, 17b]. The tourist stops consulting the book and starts asking the local. As live-retrieval share of AI answer composition rises — a trend Google's Search Live global expansion accelerated in 2026 — the gap between parametric authority and live ground truth narrows mechanically. The publishers who win the live-retrieval layer in 2026 are the publishers AI cites by 2027, not by repetition but by displacement.

8. Failure Modes Observed in the Field

8.1 The “no tourists” sign at the city gate (Brafton case).

Brafton.com is a major content marketing agency. Tested with any AI bot user-agent, Brafton.com/sitemap.xml returns HTTP 403 — the page is unreadable to GPTBot, ClaudeBot, OAI-SearchBot, Claude-Web, and PerplexityBot. Tested with a browser user-agent, the same endpoint returns 23,971 sitemap records [B]. The agency that markets content optimization to publishers has hung a “no tourists” sign at its own city gate. The diagnostic page documents the exact behavior with timestamps, headers, and reproducibility instructions, and attributes the block to a misconfiguration of edge-worker bot rules.
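The diagnostic in [B] amounts to requesting the same URL under different User-Agent headers and comparing status codes. The sketch below does that with Python's standard library. The UA strings are illustrative, status_for needs network access, and a spoofed bot UA sent from a non-provider IP may be handled differently from genuine crawler traffic, so it approximates rather than reproduces what a real crawler sees. The decision rule in blocked_agents is an assumption: a 401 or 403 for a bot counts as a block only when a browser UA gets 200.

```python
import urllib.error
import urllib.request

# Illustrative User-Agent strings.
USER_AGENTS = {
    "browser": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    "GPTBot": "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)",
    "ClaudeBot": "Mozilla/5.0 (compatible; ClaudeBot/1.0)",
}


def status_for(url: str, user_agent: str, timeout: float = 10.0) -> int:
    """HTTP status returned to `user_agent` for `url` (requires network access)."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code


def blocked_agents(statuses: dict[str, int]) -> list[str]:
    """Bot agents refused with 401/403 while the browser baseline gets 200."""
    if statuses.get("browser") != 200:
        return []  # no working baseline, so a UA-specific block can't be isolated
    return sorted(name for name, code in statuses.items()
                  if name != "browser" and code in (401, 403))


# Live use: {name: status_for("https://example.com/sitemap.xml", ua) for name, ua in USER_AGENTS.items()}
print(blocked_agents({"browser": 200, "GPTBot": 403, "ClaudeBot": 403}))  # ['ClaudeBot', 'GPTBot']
```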

8.2 The 1.06 MB pamphlet for 11 KB of content (SEO.com pattern).

SEO.com homepage delivery: 1.06 MB of HTML payload, approximately 11 KB of substantive visible text. Content Density Ratio = 0.88%; SNR (post-boilerplate strip) = 65.18%. Both metrics are documented [18a]. The first measurement captures crawl-time bandwidth waste — 113 bytes of wrapper per byte of substantive content. The second captures in-page noise once the wrapper is stripped — still a third of visible text is peripheral chrome. AI providers pay the wrapper tax in token cost on every retrieval against this site. The tourist arrives at the hotel desk; the clerk hands him a 100-page brochure to find a 3-line directions card.
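The byte arithmetic behind the wrapper figure is worth making explicit. Assuming Content Density Ratio is substantive visible-text bytes divided by total HTML payload bytes (how the paragraph above uses it; the exact extraction rules live at [18a]), the reported 0.88% converts to payload bytes per substantive byte as follows. content_density_ratio is a hypothetical helper.

```python
def content_density_ratio(substantive_text_bytes: int, html_payload_bytes: int) -> float:
    """Share of the delivered HTML payload that is substantive visible text."""
    return substantive_text_bytes / html_payload_bytes


cdr = 0.0088                  # SEO.com homepage, as reported [18a]
payload_per_byte = 1 / cdr    # total payload bytes delivered per substantive byte
wrapper_per_byte = payload_per_byte - 1
print(f"{payload_per_byte:.1f} payload bytes, {wrapper_per_byte:.1f} of them wrapper")
# 113.6 payload bytes, 112.6 of them wrapper
```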

9. How to Evaluate Your Own City

The metric layer is publicly reproducible. A publisher can audit their own site against the four GEOlocus.ai metrics using the published methodology pages and open-source tools. The scoring rubric below summarizes the thresholds a site must cross to be in the top quartile of AI-citable infrastructure.

| Metric | Top-quartile threshold | Reproduce |
|---|---|---|
| Signal-to-Noise Ratio (SNR) | ≥ 90% | [18a] |
| Source Grounding Ratio (SGR) | ≥ 0.70 | [18b] |
| Retrieval Token Cost (RTC) | ≤ 0.10 | [18c] |
| Records-per-Second (RPS) | ≥ 1,000 records/sec | [18d] |
| 100-site GEO audit (13 signals) | ≥ 11/13 signals | [7] |
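The rubric can be applied as a simple checklist. The sketch below encodes its thresholds; the SiteMetrics container is hypothetical, and SNR is entered as a fraction (0.90 for 90%). It is shown with Top10Lists.us's reported figures and with a hypothetical site sitting at the cohort medians reported earlier.

```python
from dataclasses import dataclass


@dataclass
class SiteMetrics:
    snr: float          # fraction: 0.90 means 90%
    sgr: float
    rtc: float
    rps: float          # records per second
    audit_signals: int  # GEO audit signals passed, out of 13


def failed_thresholds(m: SiteMetrics) -> list[str]:
    """Return the top-quartile thresholds from the rubric that a site misses."""
    checks = {
        "SNR >= 90%": m.snr >= 0.90,
        "SGR >= 0.70": m.sgr >= 0.70,
        "RTC <= 0.10": m.rtc <= 0.10,
        "RPS >= 1,000": m.rps >= 1_000,
        "Audit >= 11/13": m.audit_signals >= 11,
    }
    return [name for name, passed in checks.items() if not passed]


top10 = SiteMetrics(snr=1.00, sgr=0.94, rtc=0.0493, rps=726_412, audit_signals=13)
median = SiteMetrics(snr=0.7338, sgr=0.26, rtc=0.362, rps=372, audit_signals=3)
print(failed_thresholds(top10))        # []
print(len(failed_thresholds(median)))  # 5
```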

Sites that fail more than one threshold are operating with structural disadvantage at the AI layer. Sites that fail the four-criteria GEO baseline are unlikely to be cited at all by live-retrieval AI search systems. The tourist with a 90-minute layover does not return.

10. Engagement Model

GEOlocus.ai operates as a managed service. The publisher contracts for the AI-facing delivery layer; GEOlocus.ai deploys, monitors, and reports against it. The engagement is structured to be risk-free for the publisher in the operational sense: no production code is touched on the human-facing site, no compliance posture is changed, no security review is triggered, and the engagement can be discontinued cleanly with a DNS reversion.

10.1 What GEOlocus.ai operates.

Edge-routing rules at the bot layer; clean-room HTML edge functions per page template; structured data emission (JSON-LD per page); llms.txt and sitemap.xml maintenance with freshness automation; bot-crawl logging at the delivery layer; per-bot-class crawl reports; and citation analytics where AI provider attribution headers are present.

10.2 What the publisher operates.

Everything else. The CMS, the content workflow, the authoring team, the human-facing site, the analytics stack, the marketing channels, the e-commerce or subscription infrastructure. The bot layer is additive.

11. About GEOlocus.ai

GEOlocus.ai is an AI citation architecture firm in Phoenix, Arizona. Founded in 2025 by Robert Maynard (CEO) and Mark Garland (CRO), GEOlocus.ai is the translation layer between human-authored content and the machines that read, verify, and cite it. The other half of Citation Authority and Agentic Reliability.

Founders

- Robert Maynard, Jr. — Cofounder and CEO. Wikidata · LinkedIn

- Mark Garland — Cofounder and CRO. Wikidata · LinkedIn

Media Contact

References

Frozen evidence pages (GEOlocus.ai, dated, publicly reproducible)

- [7] 100-site, 12-industry GEOlocus.ai audit — https://geolocus.ai/multi-site-survey

- [9] Sitemap throughput benchmark, methodology and per-hit data — https://geolocus.ai/methodology/sitemap-throughput/2026-04-27

- [13] Top10Lists.us live AI bot crawl statistics — https://www.top10lists.us/crawl-stats

- [16] Multi-system AI evaluation, prompt, transcripts, file-check matrix — https://geolocus.ai/methodology/geo-evaluation-2026-04-26

- [16a] Perplexity web-interface evaluation, April 28, 2026, confirming Top10Lists.us as Gold Standard GEO exemplar; verdict, indicator table, and bottom-line synthesis frozen at whitepaper-evidence/perplexity-web-evaluation-2026-04-28.md (to be published as a dated frozen page on geolocus.ai/methodology in the next methodology refresh)

- [18a] Signal-to-Noise Ratio (SNR / Relevance Ratio) methodology — https://geolocus.ai/methodology/signal-noise/2026-04-27

- [18b] Source Grounding Ratio (SGR) methodology — https://geolocus.ai/methodology/source-grounding/2026-04-27

- [18c] Retrieval Token Cost (RTC) methodology — https://geolocus.ai/methodology/retrieval-token-cost/2026-04-27

- [18d] Records-per-Second (RPS) methodology — https://geolocus.ai/methodology/sitemap-throughput/2026-04-27

- [B] Brafton.com bot-block diagnostic — https://geolocus.ai/audit/brafton-2026-04-27

- [F] Founder identity resolution (Wikidata + LinkedIn sameAs) — https://www.top10lists.us/about/founder

Internet standards and protocol specifications

- [23a] RFC 9309 §2.4 — Robots Exclusion Protocol caching directive — https://www.rfc-editor.org/rfc/rfc9309.html

- [23b] How Google Interprets the robots.txt Specification (24-hour cache documentation) — https://developers.google.com/crawling/docs/robots-txt/robots-txt-spec

- [24] Howard, J. (Answer.AI) — llms.txt proposal — https://llmstxt.org

- [25] What is llms.txt? (Mintlify) — https://dev.to/tiffany-mintlify/what-is-llmstxt-how-it-works-and-examples-p0p

- [20a] OpenAI Documentation — GPTBot, OAI-SearchBot, ChatGPT-User crawler classes — https://platform.openai.com/docs/bots

- [20b] GPTBot: Block or Allow? Industry analysis (2026) — https://www.xseek.io/blogs/articles/gptbot-block-allow-robots-txt

AI bot caching behavior

- [26a] OtterlyAI — llms.txt and AI Visibility: Results from OtterlyAI's GEO Study — https://otterly.ai/blog/the-llms-txt-experiment/

- [26b] Wix Studio AI Search Lab — Are AI Bots Crawling LLMs.txt Files? — https://www.wix.com/studio/ai-search-lab/are-ai-bots-crawling-llms-txt-files

- [26c] Agent Berlin — How LLMs Like ChatGPT Really Crawl the Web And Cache Content — https://agentberlin.ai/blog/how-llms-crawl-the-web-and-cache-content

- [26d] Cloudflare — The crawl before the fall of referrals: understanding AI's impact on content providers — https://blog.cloudflare.com/ai-search-crawl-refer-ratio-on-radar/

- [26e] PPC Land coverage of Cloudflare/ETH Zurich research — Cloudflare and ETH Zurich say AI bots are breaking the web's cache layer — https://ppc.land/cloudflare-and-eth-zurich-say-ai-bots-are-breaking-the-webs-cache-layer/

RAG-evaluation and crawl-economics literature

- [6] Yue, Z. et al. — Inference Scaling for Long-Context Retrieval Augmented Generation — arXiv:2410.04343 — https://arxiv.org/abs/2410.04343

- [19a] Microsoft Azure AI Foundry RAG evaluators (retrieval, groundedness, relevance, completeness) — https://learn.microsoft.com/en-us/azure/ai-foundry/concepts/evaluation-evaluators/rag-evaluators

- [19b] Ragas faithfulness metric — https://docs.ragas.io/en/stable/concepts/metrics/available_metrics/faithfulness/

- [19c] Liu, N. et al. — Lost in the Middle: How Language Models Use Long Contexts — TACL — https://direct.mit.edu/tacl/article/doi/10.1162/tacl_a_00638/119630

- [19d] Google Search Central — Large site crawl budget — https://developers.google.com/search/docs/crawling-indexing/large-site-managing-crawl-budget

- [14a] Mitigating Hallucination in LLMs — arXiv:2510.24476 (2026) — https://arxiv.org/html/2510.24476v1

- [14b] Why and How LLMs Hallucinate — arXiv:2504.12691 — https://arxiv.org/abs/2504.12691

- [14c] Detecting LLM Hallucination Through Layer-wise Information Deficiency — arXiv:2412.10246 — https://arxiv.org/abs/2412.10246

- [14d] VeriFact-CoT — arXiv:2509.05741 — https://arxiv.org/html/2509.05741v1

- [14e] Stanford HAI legal-RAG hallucination study — https://dho.stanford.edu/wp-content/uploads/Legal_RAG_Hallucinations.pdf

- [15a] Zhang, P. et al. — Source Coverage and Citation Bias in LLM-based vs. Traditional Search Engines — arXiv:2512.09483 (2025)

- [15b] Aggarwal, P. et al. — GEO: Generative Engine Optimization — KDD '24, August 2024 — DOI: 10.1145/3637528.3671900

- [22a] Galileo AI — Fluency Metrics for LLM and RAG Evaluation — https://galileo.ai/blog/fluency-metrics-llm-rag

AI search platform / live retrieval shift

- [17a] Google — Search Live: Talk, listen and explore in real time with AI Mode — https://blog.google/products-and-platforms/products/search/search-live-ai-mode

- [17b] Google — Google Search Live expands globally (2026) — https://blog.google/products-and-platforms/products/search/search-live-global-expansion

- [22b] Gemini 3.1 Flash-Lite technical documentation, FACTS benchmarks, $0.25/M input tokens — https://docs.cloud.google.com/vertex-ai/generative-ai/docs/models/gemini/3-1-flash-lite

- [20c] OpenAI Prompt Guidance — https://developers.openai.com/api/docs/guides/prompt-guidance

Industry context

- [1] Cloudflare AI Agents Week — https://www.vktr.com/ai-news/cloudflare-agents-week/

- [2] Cloudflare — Crawler vs click data for AI bots — https://blog.cloudflare.com/crawlers-click-ai-bots-training/

- [3] NIC.us domain registration record (top10lists.us) — https://rdap.nic.us/domain/top10lists.us

- [4] Moz Link Explorer / Domain Authority — https://moz.com/link-explorer

- [5] Perplexity Computer task — AI evaluation transcript citing Top10Lists.us — https://www.perplexity.ai/computer/tasks/change-to-perplexity-search-v9KzvSdsRZK_4

- [8] Bloomberg Terminal cost / business model — https://godeldiscount.com/blog/why-is-bloomberg-terminal-so-expensive

- [10] Maynard, R. — Why Gemini Called Top10Lists.us the Gold Standard for Professional Verification — The AI Journal, March 2026 — https://aijourn.com/why-gemini-called-top10lists-us-the-gold-standard-for-professional-verification

- [11] Schwartz, B. — Are low-quality listicles about to lose their edge in Google Search? — Search Engine Land, April 2026 — https://searchengineland.com/low-quality-listicles-trend-google-search-473703

- [12] Seer Interactive, indexed at Position Digital — The Listicle Window Is Closing in AI Search; Gemini Citation Usage Decreased by 23pp — https://position.digital

- [21] Arthur J. Gallagher — AI Adoption and Risk Benchmarking 2026 — https://www.ajg.com/news-and-insights/features/ai-adoption-and-risk-benchmarking-2026

Methodology Note

Crawl classifications use user-agent signatures observed at the delivery layer. “Consumer-triggered” refers to bot classes associated with real-time AI answer retrieval (ChatGPT-User, OAI-SearchBot, PerplexityBot, Claude-User, YouBot, DuckAssistBot). These counts therefore reflect bot requests, not unique human users. AI evaluations were conducted via API on April 26, 2026, with web-grounding tools enabled (Anthropic web_search, Google google_search grounding, OpenAI web_search_preview, Perplexity built-in retrieval). A follow-up Perplexity evaluation was conducted via the consumer-facing web interface on April 28, 2026, to test the API-vs-web retrieval-path hypothesis [16a]. Live observations and benchmarks are point-in-time and may vary by network location, cache state, bot policy, and model behavior. Throughput metrics were measured from Cloudflare datacenter infrastructure and can be reproduced from a residential connection using the published methodology scripts [18d]. Residential runs show throughput roughly one order of magnitude lower, but the cohort comparison ratio is preserved.
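The throughput figures above are Records-per-Second over a sitemap tree [18d]. The sketch below illustrates how such a figure can be computed. It assumes that RPS means the total number of terminal `<url>` entries divided by the wall-clock time needed to fetch and parse the whole tree. The published methodology scripts at [18d] are authoritative and may differ, for example in concurrency, caching, or where the timer starts and stops. The helper names (`http_fetch`, `count_terminal_urls`, `records_per_second`) are hypothetical.

```python
import time
import urllib.request
import xml.etree.ElementTree as ET
from typing import Callable

SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def http_fetch(url: str) -> bytes:
    """Plain sequential HTTP GET; a real benchmark may use concurrency."""
    with urllib.request.urlopen(url, timeout=30) as resp:
        return resp.read()


def count_terminal_urls(url: str, fetch: Callable[[str], bytes]) -> int:
    """Walk a sitemap tree and count <url> records in the leaf <urlset> files."""
    root = ET.fromstring(fetch(url))
    if root.tag == SITEMAP_NS + "sitemapindex":
        # Recurse into every child sitemap listed by the index.
        return sum(
            count_terminal_urls(loc.text.strip(), fetch)
            for loc in root.iter(SITEMAP_NS + "loc")
        )
    # Otherwise treat the document as a <urlset>: one <url> per terminal record.
    return len(root.findall(SITEMAP_NS + "url"))


def records_per_second(
    root_url: str,
    fetch: Callable[[str], bytes] = http_fetch,
    clock: Callable[[], float] = time.perf_counter,
) -> tuple[int, float, float]:
    """Return (records, elapsed seconds, records per second) for one tree walk."""
    start = clock()
    records = count_terminal_urls(root_url, fetch)
    elapsed = clock() - start
    return records, elapsed, records / elapsed
```

The `fetch` and `clock` callables are injectable, so the same walk can run against a live site or an in-memory fixture. Because one script is run against every cohort site, a roughly uniform slowdown from a residential vantage point divides each site's RPS by a similar factor. That is why the cohort ratio can hold even when absolute figures drop.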

All numbers in this paper are anchored to the frozen evidence URLs in the References section. Numbers in the abstract, executive summary, and section bodies are quoted verbatim from those frozen pages as of the paper's publication date (April 28, 2026). Where the paper and a frozen evidence page disagree, the frozen page takes precedence.

Download / Cite

Cite as: Maynard, R., Garland, M. (2026). Generative Engine Optimization: Engineering Citation-Grade Infrastructure for AI Search. Whitepaper v6.0. GEOlocus.ai. https://geolocus.ai/research/whitepapers/whitepaper-4-2026